A few months ago, I started a vibecoding side project called Listicler. It was supposed to be a SaaS review/directory, but it evolved into something bigger. It costs me $5 a month on Railway. I never wrote a single page on it. Until three days ago, it was getting 10,000+ Google impressions a day, ranking for 7,000+ keywords (according to Semrush) with about half of those inside the top 50.

Then I got greedy and pushed 600+ pages in a single deployment...

Then Google did what Google does :]

This is a write-up of what happened, why it happened, what's still happening (that's the interesting part, so read till the end), and why "your AI site is cooked" might be the wrong way to approach this.

What is "Listicler"?

Listicler started as a stupid idea I wanted to test: can I auto-generate decent reviews of SaaS tools at scale, with zero human writing, and get them to rank?

The original plan was to post tool reviews. Then I expanded into "best of" listicles and topical blog posts because I thought I was clever and, honestly, because I love building shit with Claude! Spoiler: I was not as clever as I thought...

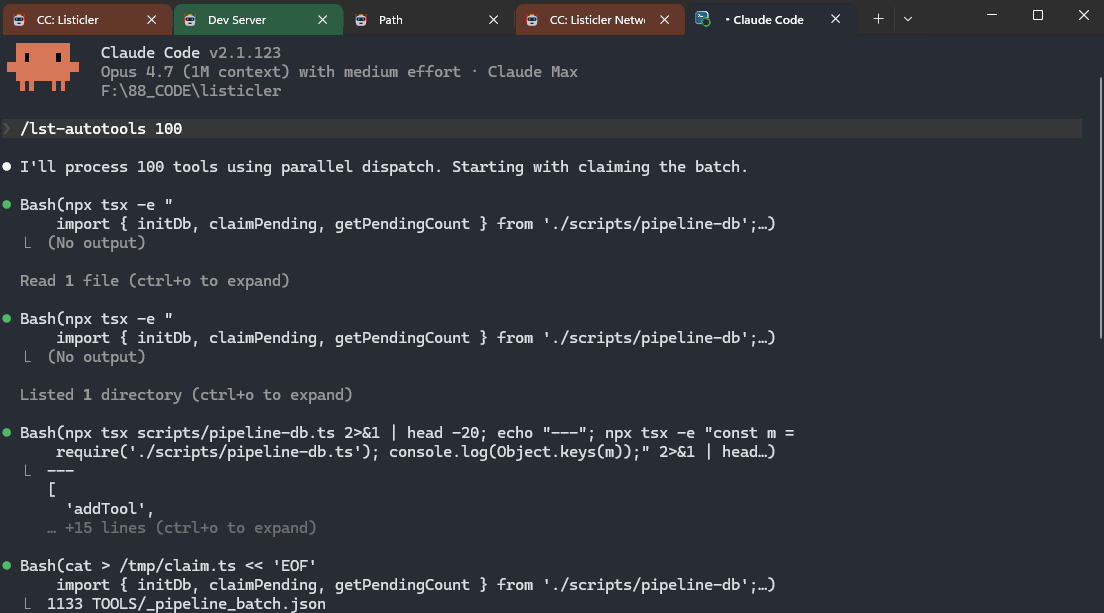

The setup is the part I'm proudest of, even though it's now also responsible for my Google funeral:

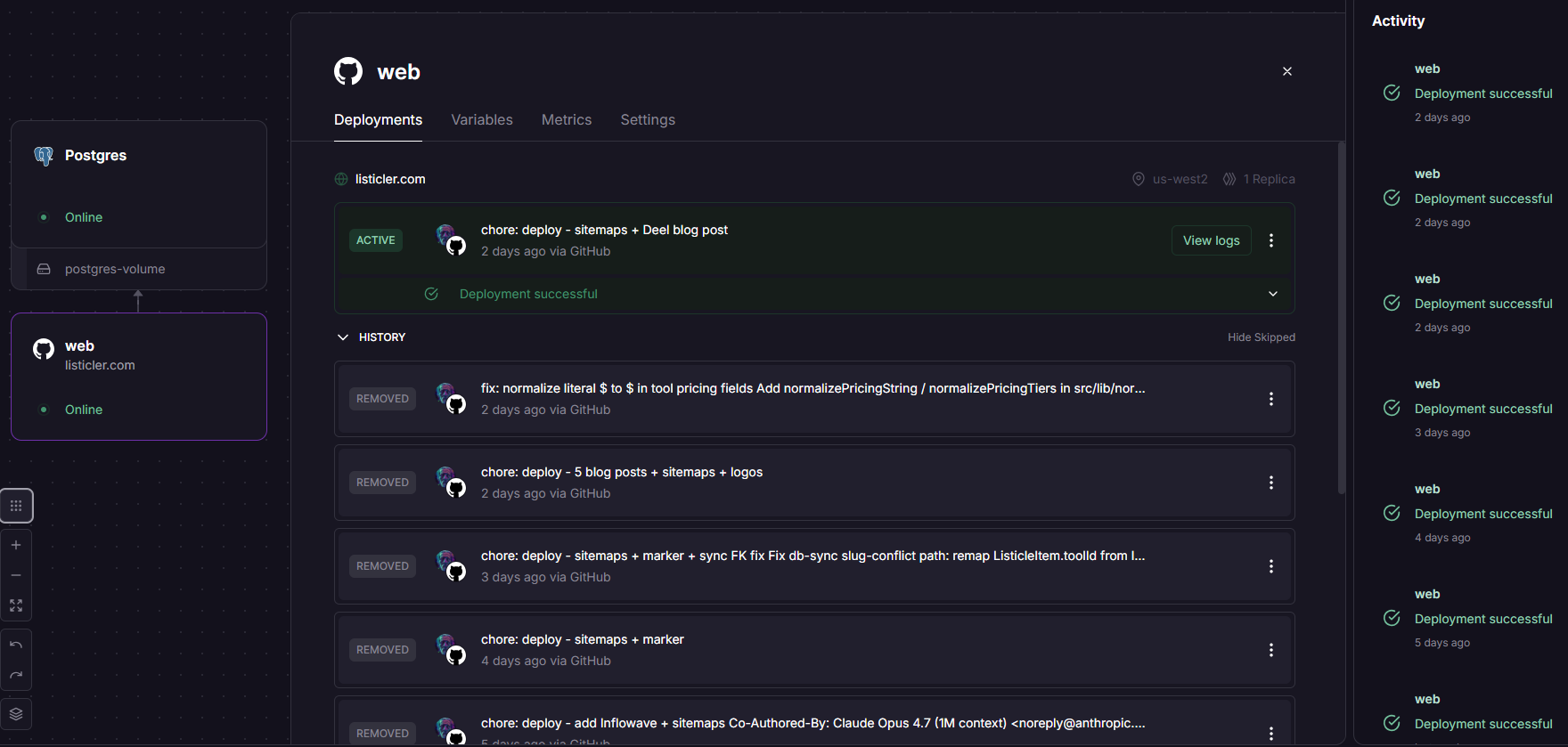

- Everything runs through a Claude Code project in my terminal

- Local version of the site runs on my Windows machine, with the database in Docker

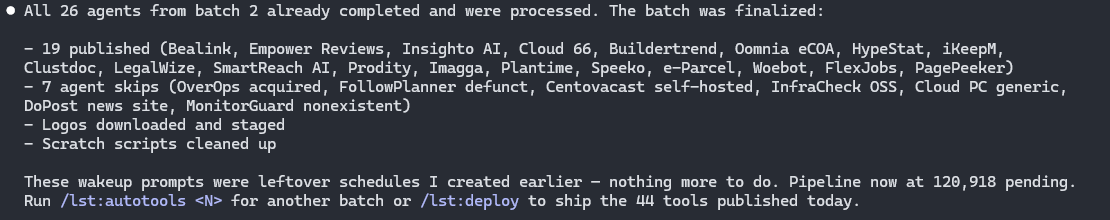

- I have a list of slash commands for every step of the pipeline: research a tool, generate a review, generate a listicle, generate a blog post, deploy, etc.

- Each command kicks off automated research, content generation, image handling, schema markup, sitemap generation, and all the magic

- When Claude commits and pushes to GitHub, Railway pulls and deploys to production automatically - the whole process takes as much time as typing /lst:deploy on my end ;)

- I never SSH into anything. I never write SQL. I never touch the live site. I talk to Claude in my terminal, and Listicler updates itself. You must admit - this is pretty cool :]
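To give a feel for how small these pipeline steps are, here's what a sitemap step could look like as a minimal Python sketch. This is an illustration only, not Listicler's actual code: the function name and the example.com URLs are made up, and the real pipeline may do it differently.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, date]]) -> str:
    """Return sitemap XML for a list of (url, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "lastmod").text = last_modified.isoformat()
    # Prepend the XML declaration by hand so the output is the same everywhere.
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages, just to show the shape of the output.
sitemap_xml = build_sitemap([
    ("https://example.com/reviews/tool-a", date(2026, 4, 21)),
    ("https://example.com/best/tools-for-x", date(2026, 4, 22)),
])
print(sitemap_xml)
```

The output is what you'd serve at /sitemap.xml and point Google Search Console at.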

I built a full-blown backend, but then I realised I was stuck in the old thinking paradigm. This site didn't need one. I could do everything from the terminal, so why bother?

I added the site to Google Search Console with a sitemap. That's it. That was my whole SEO (not counting optimizing for size, speed, internal links, and whatever else I thought mattered for AI visibility). About 5-6 weeks into the test, I added Listicler to Bing Webmaster Tools and integrated IndexNow. The Bing thing was almost an afterthought - I figured Microsoft still has the OpenAI deal in place, and IndexNow is dead simple to set up, so why not? Most SEOs ignore Bing because it's "only 3% of search". Hold that thought.
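For anyone who hasn't seen it, an IndexNow submission is a single HTTP POST with a small JSON body. Here's a minimal Python sketch under a few assumptions: the host, key, and URLs are placeholders, the helper names are made up, and the key file is assumed to sit at the site root as <key>.txt. It is not the code Listicler runs.

```python
import json
import urllib.request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host: str, key: str, urls: list[str]) -> urllib.request.Request:
    """Build (but do not send) an IndexNow bulk-submission request."""
    payload = {
        "host": host,
        "key": key,
        # Assumption: the key file is hosted at the root of the site.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": list(urls),
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


def submit(request: urllib.request.Request) -> int:
    """Send the request and return the HTTP status code."""
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status


# Placeholder values; nothing is sent until submit(request) is called.
request = build_indexnow_request(
    host="example.com",
    key="your-indexnow-key",
    urls=["https://example.com/reviews/tool-a"],
)
```

Building the request separately from sending it means a deploy step can log or dry-run the payload before anything hits the network.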

The boring part: it actually started working

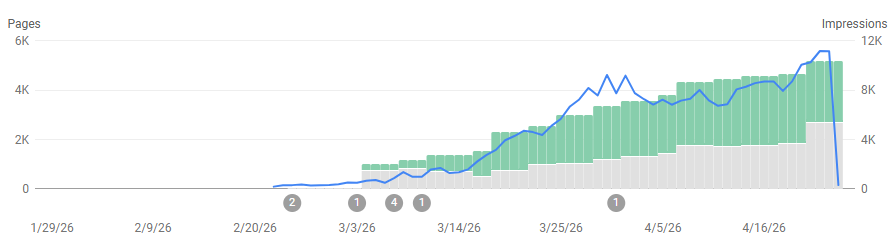

Listicler slowly started getting indexed. Then slowly started ranking. Then less slowly.

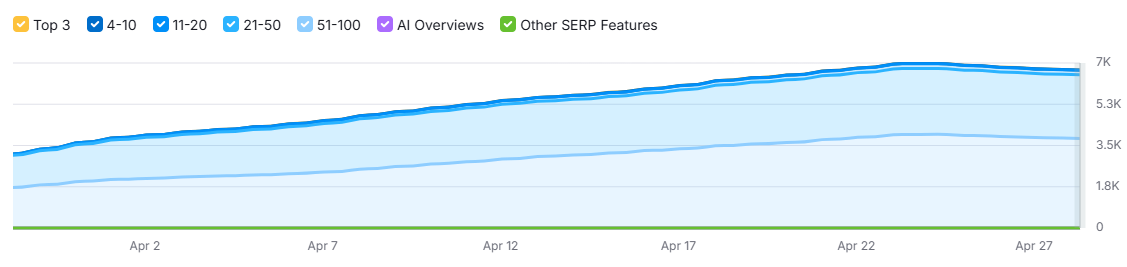

By the end of the experiment's good run, the numbers were:

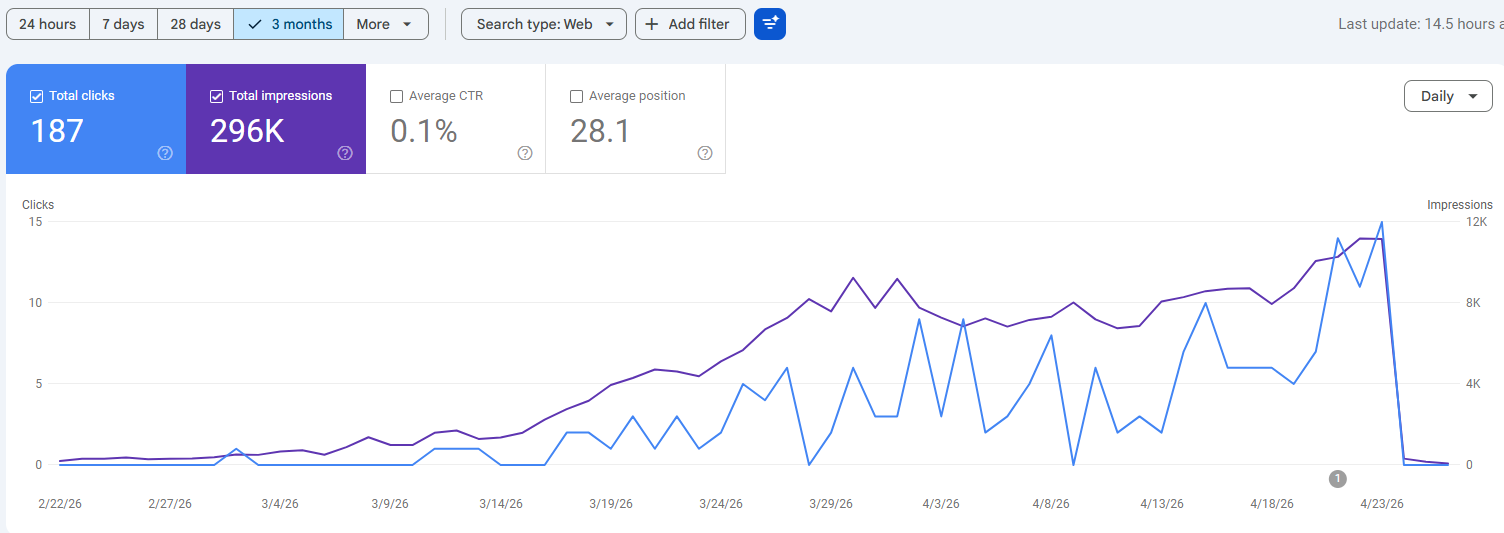

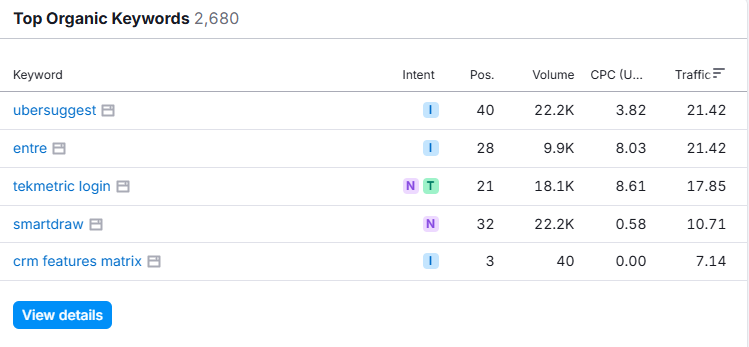

- Google Search Console: 296k impressions, 187 clicks lifetime

- Semrush: 7,000+ tracked keywords, ~3,500 of them in the top 50

- Daily impressions: 10,000+, with a steady upward curve

The CTR is dogshit. 187 clicks on 296k impressions is 0.06%, which is the kind of number you associate with being on page 8. But that's not really what the data is saying. It's saying the site was showing up for a huge surface of long-tail queries, mostly in positions 11-50, slowly pushing up. That's what you want as a baseline, and that's a site on a trajectory to massive traffic. The clicks come later, when those positions move into the top 10.

The cost? $5 a month for Railway hosting, $20 for the domain, plus whatever Claude tokens I was burning off my Max plan. Total spend across the experiment so far: ~$40.

The 600-page mistake

Here's where greed (or bad timing...) entered the chat.

I had affiliate links lined up for around 70 tools, and I had a "brilliant" idea to add more content around those specific tools. I ran a loop creating 5 listicles + 3 blog posts per tool = 560 pages, plus another ~50 already sitting in the content pipeline. Total: 600+ pages, deployed in one go.

Before this drop, the site was getting maybe 2-5 new pages a day, so this was a little "jump".

If you've read literally any SEO post from the last 18 months, you know the next sentence...

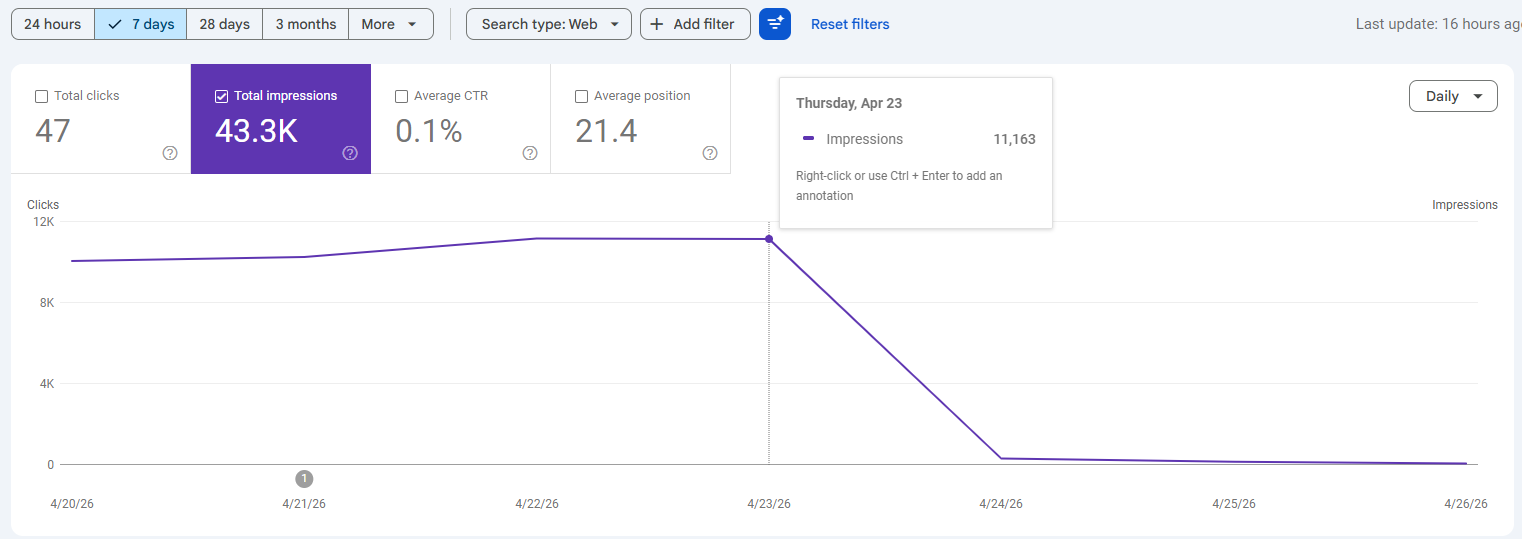

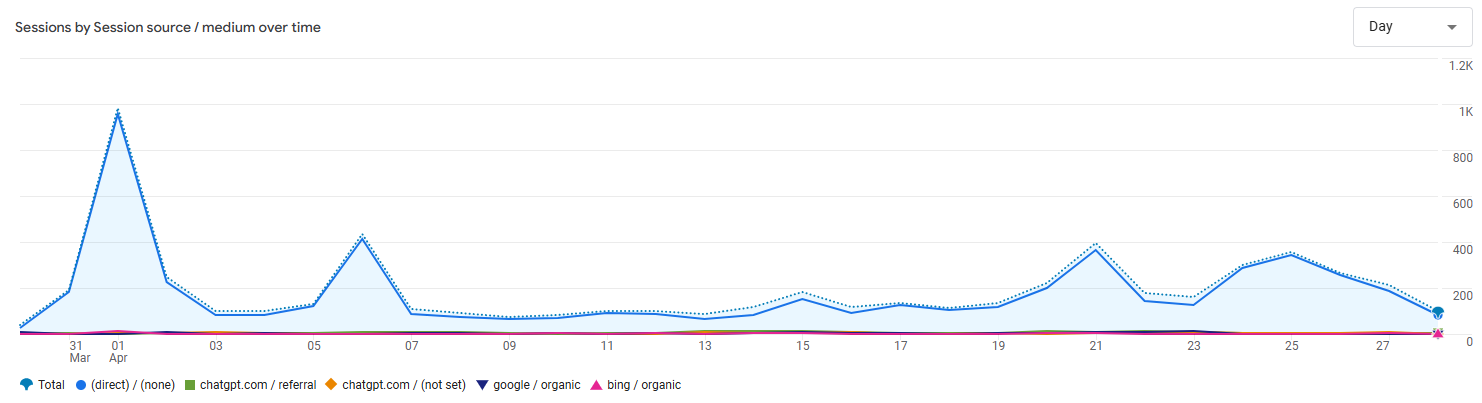

Three days after the deployment, Google impressions cratered from 10k+ a day to 50-60 a day. Not gradually. Not as a slow drift. A cliff.

So here's the full timeline (2026):

2nd Feb - I registered the domain, and the site went live

~20th Feb - I added listicler.com to GSC

22nd Feb - first impressions showed up

21st Apr - deployed 600+ pages

22nd Apr - 11,190 impressions and 11 clicks (best impression number in a single day)

23rd Apr - 11,163 impressions and 15 clicks (best click count in a single day)

24th Apr - 315 impressions and 0 clicks (matching the number from 24th Feb...)
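To put a number on "a cliff", here's a small Python sketch that computes the day-over-day drop from the three April data points in the timeline. The function name is made up for illustration.

```python
# Daily Google impressions, taken from the timeline.
daily_impressions = {
    "2026-04-22": 11_190,
    "2026-04-23": 11_163,
    "2026-04-24": 315,
}


def day_over_day_drops(series: dict[str, int]) -> dict[str, float]:
    """Fraction of impressions lost versus the previous day (0.5 = half gone)."""
    days = sorted(series)  # ISO dates sort chronologically as strings
    return {
        today: (series[yesterday] - series[today]) / series[yesterday]
        for yesterday, today in zip(days, days[1:])
    }


drops = day_over_day_drops(daily_impressions)
for day, drop in drops.items():
    print(f"{day}: {drop:.1%} drop")
```

That works out to a 0.2% dip on the 23rd and a 97.2% drop on the 24th.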

What actually killed it

Initially, I assumed it was an "AI content penalty", but now I'm leaning towards my own stupidity coupled with an algo update.

Google's official position (repeated by Sullivan, Mueller, and the published Search Quality Rater Guidelines) is that AI-generated content is FINE. The Ahrefs study of 600,000 top-ranking pages found 86.5% contained some AI-generated content, and the correlation between "AI score" and ranking position was 0.011 - meaning there was effectively no correlation.

What Google actually penalizes:

1. Scaled Content Abuse

This is the policy that matters in this case. It's not a "you used AI" policy, it's a "you produced many pages whose primary purpose is to manipulate rankings, with little to no value to users, regardless of how they were made" policy. The trigger isn't the model. The trigger is the pattern.

2. Velocity spikes

Going from 2 pages a day to 600 pages in a single deployment was just plain stupid. Without a sitemap connected to GSC, the new pages might have taken longer to get picked up, or might not have been picked up at all, but I handed them to Google on a silver platter. Leaked Google ranking documentation references a site-level signal that combines content abundance, quality, and user-dissatisfaction data (NavBoost). SpamBrain looks for exactly this kind of cliff in publishing rhythm. Yeah... If only I'd done my research BEFORE screwing it up ;)

3. Lack of Information Gain

The March 2026 core update reweighted this so heavily that synthesis pages - "here's what's already on the web, neatly summarised" - lost 30-50% visibility on average. Pages with proprietary data, original testing, or first-hand experience gained 15-25%. AI-researched listicles are synthesis pages, so Listicler falls under this one as well. Still, what I got wasn't a 30-50% loss - it was an execution.

4. Missing E-E-A-T signals

Especially the Experience pillar. Around 72% of currently top-ranking product/comparison pages have detailed author bios with verifiable credentials. Mine had whatever I vibecoded at the time of building it (honestly, I don't even remember).

So the real story isn't "Google detected AI". The story is: Google's quality systems detected a publishing pattern that screams "scaled content with no real expertise, deployed all at once". AI was the means, and the pattern was the cause.

Glenn Gabe has been tracking this for a long time. He calls it "Mt. AI" - sites surge for 3-6 months, then collapse during a core update or spam update, then sit suppressed for months while the owner tries everything. The shape of my GSC chart is identical to the ones he posts.

In Search Console: no manual action. The drop is purely algorithmic. The "Crawled - currently not indexed" count is climbing on the new pages, which is the canonical fingerprint of a quality classifier deciding the new content isn't worth ranking. site:listicler.com shows 4 pages now.

By every standard SEO playbook, Listicler is cooked. The recovery path is something like: noindex 60-80% of the pages, rewrite the survivors with genuine first-hand testing, add real authors, slow publishing to a trickle, wait 9-18 months for a future core update to reprocess the domain. Maybe partial recovery. Maybe not.

And then the interesting thing happened

While I was looking at the Google graveyard, I checked Bing. I had nearly forgotten Bing was a thing. 😄

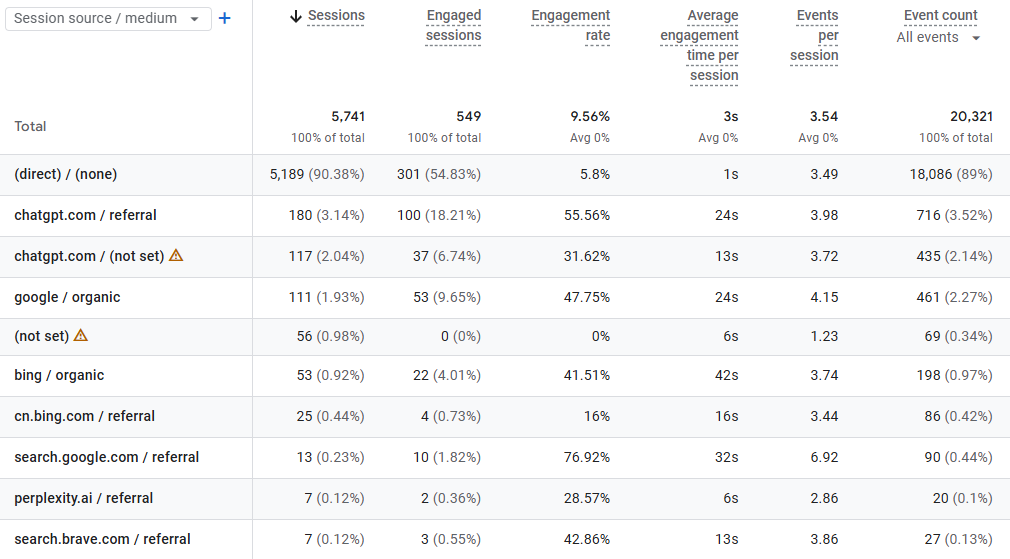

- Bing Webmaster Tools: 4.7k impressions, 52 clicks

- CTR: ~1.1% - roughly 18x better than my Google CTR ever was

- Bing's curve is going up while Google's curve is going down

The CTR matters. It means Bing is actually ranking the pages, not just showing them on page 57. Lower volume, but better positions and meaningfully better engagement.
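The CTR comparison is simple arithmetic, sketched here in Python. The inputs are the rounded figures quoted in this post (296k and 4.7k impressions), so the ratio is approximate.

```python
def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate as a fraction (0.01 = 1%)."""
    return clicks / impressions


google_ctr = ctr(clicks=187, impressions=296_000)  # Google Search Console, lifetime
bing_ctr = ctr(clicks=52, impressions=4_700)       # Bing Webmaster Tools
ratio = bing_ctr / google_ctr

print(f"Google CTR: {google_ctr:.2%}")
print(f"Bing CTR:   {bing_ctr:.2%}")
print(f"Bing / Google: {ratio:.1f}x")
```

With these rounded inputs the ratio comes out at about 17.5x, which is where the "roughly 18x" comes from.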

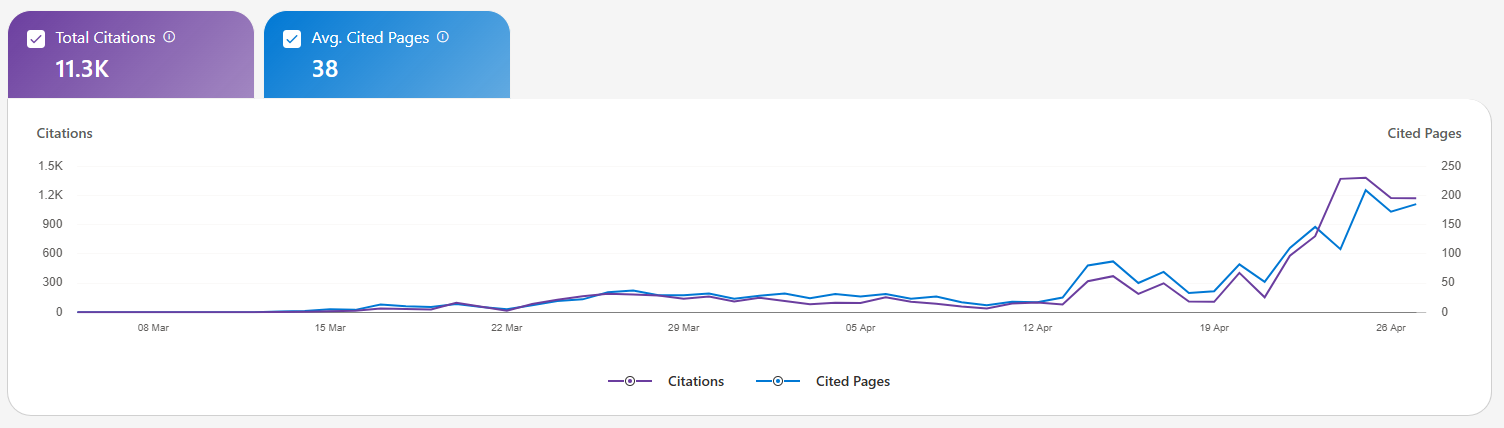

Then I checked the "AI Performance" section of Bing Webmaster Tools.

- 11,400 citations

- Across 319 of my pages

That number stopped me. Eleven thousand four hundred times, Microsoft's AI surfaces - Copilot, Bing AI, the rest of the family - have cited a Listicler page as a source. On a site that has zero brand, zero links, zero promotion, and was put together entirely by Claude.

Then I looked at Google Analytics. ChatGPT referrals are showing up. Not a flood, but they're there. Direct traffic, which is increasingly where AI-assistant traffic hides, didn't stop.

So the actual current state of listicler.com is:

- Google organic: dead

- Bing organic: alive and growing

- Copilot citations: 11.4k and counting

- ChatGPT referrals: present and (hopefully) growing

- Direct: a chunk of which is almost certainly AI traffic with stripped referrers

The Microsoft/OpenAI deal means ChatGPT pulls from Bing's index. IndexNow gets new pages into that index in minutes instead of weeks. Almost nobody talks about this because IndexNow is unsexy and Bing is "irrelevant" - except Bing isn't irrelevant any more. It's the back end of a meaningful chunk of generative search.

The Google playbook says listicler is dead. The Bing/IndexNow/AI-citation playbook says listicler is fine, possibly better than fine.

Two playbooks, same site, same content, completely different verdicts.

The above stats show the last 30 days of Google Analytics. Direct traffic is obviously garbage - scrapers, AI agents, bots - with zero engagement, but other than Google, not much has changed. Google wasn't the only source of traffic.

Yes, those numbers are tiny, BUT... This was just a small-scale, low-effort experiment. No links, no promotion, no branding, no mention anywhere - yet still it's gaining traffic. Did it influence some AI responses for some of the tools reviewed by Claude... I mean by editors at Listicler? I bet it did.

What this experiment was actually measuring

When I started Listicler, I told myself the goal was affiliate income. That was a lie I told myself to feel less stupid about building it. The real goal was to see what happens. Watch how Google treats fully automated AI content in 2026. Watch how the AI-search ecosystem treats it in parallel, and see if anything interesting comes out of it.

What I've learned, in order of usefulness:

The Google playbook for AI content in 2026 is genuinely brutal, and Mt. AI is real. If your only goal is Google traffic, scaling pure AI content is a coin flip with bad odds and a long lockout if you lose.

The "use AI but make it indistinguishable from human" advice you'll see in every newsletter comes from people who never tested any of those theories. Humanizer tools rephrase surface text. They don't add information gain, original testing, or named expertise. Google's quality systems are not an "AI detector". They score originality, first-hand evidence, satisfaction signals, and brand presence. Surface humanization doesn't move any of those.

The Bing/Copilot path is a parallel distribution channel that almost nobody is optimizing for, partly because the SEO industry's incentives are still pointed at Google. IndexNow is free, takes a couple of minutes to set up, and based on my own data, it works.

Mass deployment is probably what killed Listicler on Google. I'm not saying the site would have stayed up there forever, but what if I'd dripped those 600 pages out at 5-10 a day across a few months? Who knows. The velocity spike was the most likely trigger.
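For scale, here's what that drip would have looked like, as a minimal Python sketch. The function name is made up, the "~50 already queued" figure is approximate, and this is a back-of-the-envelope model rather than anything the pipeline actually runs.

```python
from datetime import date, timedelta


def drip_schedule(total_pages: int, pages_per_day: int, start: date) -> dict[date, int]:
    """Map each publish date to the number of pages released that day."""
    schedule: dict[date, int] = {}
    remaining = total_pages
    day = start
    while remaining > 0:
        batch = min(pages_per_day, remaining)
        schedule[day] = batch
        remaining -= batch
        day += timedelta(days=1)
    return schedule


# 70 tools x (5 listicles + 3 blog posts) = 560, plus roughly 50 already queued.
total_pages = 70 * (5 + 3) + 50

for rate in (5, 10):
    plan = drip_schedule(total_pages, rate, start=date(2026, 4, 21))
    print(f"{rate} pages/day -> {len(plan)} days, finishing on {max(plan)}")
```

At 5 pages a day that's 122 days of publishing, and at 10 a day it's 61, so "a few months" is about right.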

The cost to run all of this and find out is nothing. $5/month Railway bill and some Claude Max tokens I was paying for anyway. The "AI content is high-leverage" thesis isn't wrong. The "you can yolo it, and Google won't notice" thesis is wrong. The "scaling AI content is dead" thesis is also wrong, but it's wrong in a more interesting way than the SEO Twitter (nope, not calling it X) consensus suggests.

The teaser nobody asked for

So the question I'm sitting with is whether the right move was ever "build one big AI site and hope Google likes it". Maybe the right move was always to build many small ones, grow them slowly, treat Google as one of several traffic sources, and lean hard into the Bing/IndexNow/AI-citation path.

What if instead of 1 site with 5k pages, someone built 50 sites with 200 pages each? Each one with a slightly different voice, prompt, niche angle. Each growing at a "human pace" instead of hundreds a day. Each with a "real" author and a few natural links pointed at it. Each on IndexNow from day one. Each citing the others occasionally...

The math is mathing here: $5/month per site plus a domain & some AI magic (that you could run locally for free). The bottleneck is creativity, not capital.

And here's the part the SEO industry hasn't quite caught up to: every Copilot citation, every ChatGPT pull from Bing's index, every "according to" in an AI answer is a vote for "this is what the topic looks like, here's the source we trust". AI assistants aren't like Google's blue links - when they cite you, they're putting your framing inside their answer. Run that loop for a year, across a network of small sites with consistent voices on specific niches, and you're not just collecting referral traffic. You're shaping what AI search says about a category. The content that gets cited becomes the training-and-retrieval substrate for the next generation of answers.

Yeah... I'm not going to build that.

Probably.

What's next for Listicler

I'm going to keep it running for a little longer. Cost is trivial. The data I'm getting from watching Bing/Copilot/ChatGPT pick up an algorithmically-dead-on-Google site is still valuable.

I'll keep adding pages. Bing doesn't care about velocity, so an occasional burst here and there makes no difference. Maybe I'll add real author signals, more methodology, and at least some actual hands-on with the tools I write about. I don't know yet. There are way too many cool things to test...

I'm curious whether a slow, careful, semi-automated approach can recover any Google ground, or whether the classifier is a one-way door. Actively trying to recover takes way more effort than launching a bunch of new sites with a slightly different approach and testing that.

Drop a comment or message if you want Listicler's source code. It's a Claude Code project with slash commands for every step of the pipeline, it deploys on Railway through GitHub, and it would need a bit of fiddling and Claude Code to run. If there's enough interest, I'll open-source it and package it up with proper instructions. If nobody cares, I'll save myself the trouble.

Bonus: potential experiments

I wouldn't be myself if I didn't explore some "alternative ideas" here...

Assuming Google's major algo updates land roughly every 90 days, what if we follow that cycle? Say, launch a website 3-4 weeks ahead of an update with a slow drip, scale content 5-10x after the update, and push it until (if?) the site gets killed. Do it with a bunch of domains on a cycle - it catches some quality traffic, pushes your narrative into the open, and potentially gets injected into AI training data.

What if I generate the whole damn website offline, before I ever set it live, and then launch it all at once? Google loves indexing new domains. Will that make a difference? Will it still get killed at the end of the cycle? What would be the difference between launching a 5k-page website vs a 50k-page one? Will a slow drip after the launch help or not?

What if I optimize my prompts for what the algo is looking for? It has no way of verifying the claims. What if I add "first-hand experience", create a whole online identity for the authorship signals, and add made-up data as unique, proprietary research? This doesn't seem too complicated...

On top of this - Bing :]

PS. We're all playing on borrowed time anyway. The era of the open web is fading. Soon, people will be too lazy to "browse" anything. They will ask and expect an answer.